In today’s rapidly evolving AI landscape, large language models are becoming a key driver of industry change. Grok 4 and Claude Opus 4.1, two of the most talked-about advanced models, both demonstrate exceptional capabilities in crucial areas like reasoning, programming, and mathematics, yet they contrast sharply in pricing strategy and real-world application. AGIYes analyzes the two models from multiple angles, including performance, price, and features, to help you understand their strengths and the use cases each one suits.

I. Grok 4 vs Claude Opus 4.1: Performance Comparison

01. Reasoning Capability: Grok 4’s Multi-Agent Architecture Takes the Lead, Claude 4.1 Focuses on Deep Thinking

In terms of reasoning, Grok 4 employs a multi-agent architecture in which multiple AI agents analyze a problem in parallel, significantly boosting accuracy on complex tasks. With this design it scored 15.9% on the ARC-AGI-2 test, far surpassing Claude Opus 4.1’s 8.6%.

Grok 4 also performed exceptionally well on the Vending Bench, which measures the ability to solve complex tasks, with a net value twice that of Claude 4.1. This indicates that its decision-making capabilities in a simulated business environment are superior, allowing it to better handle real-world problems that require multi-step reasoning.

Claude Opus 4.1, on the other hand, adopted a different technical approach: Extended Thinking. When faced with a complex problem, it can access a “thinking space” of up to 64,000 tokens to first plan steps, analyze pros and cons, and self-correct before providing a precise answer.

This method makes Claude 4.1 more stable and reliable for tasks requiring deep reasoning, and it demonstrates stronger capabilities in detail tracking and agent-based search.

02. AGI Capability: Grok 4 Leads in General AI Tests

On the ARC-AGI-2 test set, which measures general artificial intelligence, Grok 4 became the first model to break the 10% threshold. Its score of 15.9% is nearly double Claude Opus 4.1’s 8.6% (about 1.85 times).

This test is widely recognized as the “ceiling” for measuring a model’s reasoning ability, and Grok 4’s breakthrough performance signals a significant step forward in the field of general AI.

Elon Musk even proclaimed: “Grok 4 is smarter than human graduate students in almost all subjects.” This confidence stems from Grok 4’s excellent performance across multiple dimensions, especially on tasks requiring superhuman-level reasoning.

While Claude Opus 4.1 is slightly weaker on some purely logical reasoning tests, it has shown more robust performance in real-world applications.

It focuses on enterprise-grade safety and compliance, achieving a harmless response rate of 98.76%, which makes it more reliable in business environments.

03. Code Generation and Repair: Claude 4.1 Is More Precise, Grok 4 Is Faster

In the competitive field of code generation and repair, Claude Opus 4.1 demonstrates a clear advantage. On the SWE-bench Verified test, the gold standard for measuring AI models’ ability to fix real-world code bugs, Claude Opus 4.1 achieved an outstanding score of 74.5%, reinforcing its leadership in automated code repair. Because no specific Grok 4 score on this benchmark has been disclosed, a direct numerical comparison is not possible.

This test is not a hypothetical multiple-choice exam; it requires the AI to directly face programming problems pulled from real GitHub repositories and fix the errors like a human engineer.

Claude 4.1’s multi-file code refactoring ability is particularly outstanding. Test results from Rakuten Group show that it can accurately identify and fix errors in large codebases while avoiding the introduction of new issues.

This makes it a preferred tool for enterprise-level code maintenance. One developer commented: “The experience of using the Claude Code tool to call Opus 4.1 is far superior to using third-party tools like GitHub Copilot.”

Grok 4 also performs well in code generation. While its specific SWE-bench score has not been disclosed, it holds an advantage in real-time code execution and multi-language debugging.

Musk has claimed that Grok 4 can analyze and fix entire source code files, providing a better user experience than Cursor. It’s especially worth noting that Grok 4 is more seamless in programming automation, thanks to its native integration design for tool usage.

04. Mathematical Reasoning: Grok 4 Is Nearly Perfect, Claude 4.1 Is Steadily Improving

Mathematical reasoning is a crucial indicator of an AI model’s logical thinking. In this area, Grok 4 performs exceptionally well, achieving a perfect score on the AIME25 math competition.

This is the ultimate test of a model’s logical reasoning and mathematical ability, demonstrating Grok 4’s excellence in solving complex math problems.

Grok 4 has also dominated top-tier math competitions like the Harvard-MIT Math Tournament (HMMT) and the USA Mathematical Olympiad (USAMO), showcasing a broad advantage in mathematical reasoning. These results prove Grok 4’s capability in handling tasks that require a high degree of abstraction and logical thinking.

Although Claude Opus 4.1 has not released specific math test scores, its overall performance improvements suggest a solid capability in mathematical reasoning.

Especially on multi-step math problems, Claude 4.1’s Extended Thinking ability allows it to better plan its solution path and solve complex problems step-by-step.

05. Scientific Reasoning: Grok 4 at a Doctoral Level, Claude 4.1 as a Research Assistant

In the field of scientific reasoning, Grok 4 has demonstrated a true “doctoral-level” standard. On the GPQA (graduate-level scientific questions) test, Grok 4 achieved near-perfect performance, easily handling graduate-level scientific problems.

Musk even predicted: “Grok 4 may discover new usable technologies by the end of this year and new physics next year.”

Grok 4 also has excellent applications in the biomedical field. It has been reported that the ARC Institute in Palo Alto is using Grok 4 to automate CRISPR research workflows, “screening millions of experiment logs in seconds to find the best hypotheses.”

Furthermore, Grok 4 received the highest rating in chest X-ray evaluations, demonstrating its potential for applications in specialized medical fields.

Claude Opus 4.1, on the other hand, focuses more on research assistance features in scientific reasoning. Its multi-turn, agent-based search capability allows researchers to more efficiently organize scientific literature and analyze experimental data.

When faced with tasks like “research progress in a certain field over the past five years,” Claude 4.1 will proactively ask for specific details, then conduct searches in multiple turns, listing the research progress from different stages one by one and providing a table of key differences.

From a practical application perspective, the choice depends on your specific needs. If you need to solve complex engineering problems and conduct scientific reasoning, Grok 4 is likely a better choice.

If you are more concerned with precise code repair and enterprise-grade applications, Claude Opus 4.1 has a greater advantage.

In the coming weeks, Anthropic has also promised to release “significant improvements” to its models, while xAI plans to release an AI programming model and a multimodal agent. This AI race has just begun, and the ultimate beneficiaries will be the entire tech industry and its users.

II. Grok 4 vs Claude Opus 4.1: Price Comparison

01. Pricing Strategy: Grok’s High-End Aggressiveness, Claude’s Premium Value

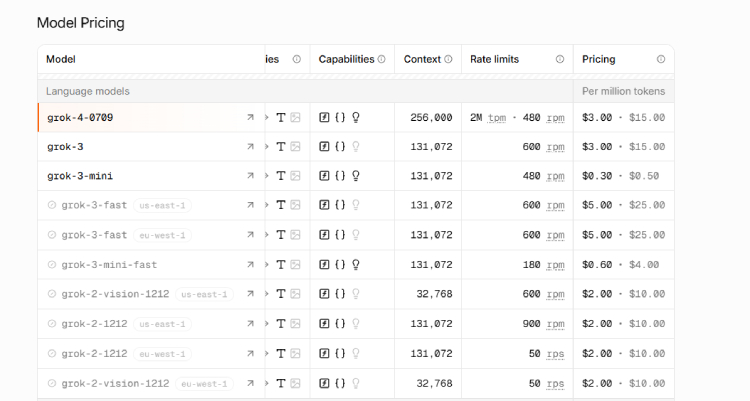

Grok 4’s pricing strategy reflects Musk’s consistent high-end brand positioning. According to official data from xAI, the API pricing for Grok 4 is $3 per million input tokens and $15 per million output tokens.

This pricing is notably higher than the market average, but what’s truly eye-catching is the SuperGrok Heavy plan, at $300 per month (approximately 2,000 RMB). That exceeds even OpenAI’s most expensive subscription, the $200-per-month Pro plan.

Musk is employing a classic high-price strategy, aiming to reinforce Grok 4’s market perception as a “technological leader.” By setting a high-priced flagship product, xAI not only enhances its overall brand image but also provides a price reference for potential mid-to-low-end product lines.

This strategy is not uncommon in the tech industry; companies like Apple and Adobe have successfully used similar methods to build a high-end image.

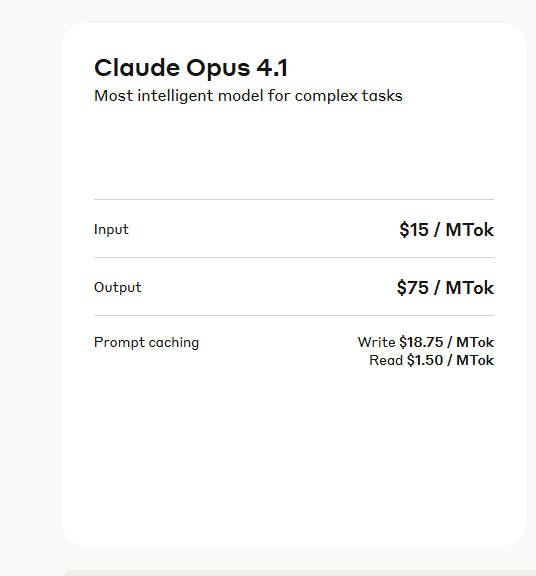

Claude Opus 4.1 has adopted a different pricing approach. Anthropic’s API price for Opus 4.1 is $15 per million input tokens and $75 per million output tokens.

In absolute terms, this is five times Grok 4’s API price for both input and output tokens, but Anthropic offers more cost-optimization options. Users can save up to 90% by enabling prompt caching and up to 50% by using batch processing.
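To make the comparison concrete, here is a minimal cost-estimator sketch using only the list prices quoted above. The discount model is a simplifying assumption, not Anthropic’s exact billing rules: cached input tokens are billed at 10% of the input price (the “up to 90%” figure), batch processing halves the total (the “up to 50%” figure), the two discounts are assumed to stack, and any cache-write surcharge is ignored. The names `Pricing` and `estimate_cost` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_mtok: float   # USD per million input tokens
    output_per_mtok: float  # USD per million output tokens


# List prices as quoted in this article.
GROK_4 = Pricing(input_per_mtok=3.0, output_per_mtok=15.0)
OPUS_4_1 = Pricing(input_per_mtok=15.0, output_per_mtok=75.0)


def estimate_cost(pricing, input_tokens, output_tokens,
                  cached_fraction=0.0, batch=False):
    """Estimate the USD cost of a workload under the assumptions above."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    # Assumption: cached input is billed at 10% of the normal input price.
    billable_input = fresh + cached * 0.10
    total = (billable_input * pricing.input_per_mtok
             + output_tokens * pricing.output_per_mtok) / 1_000_000
    # Assumption: batch processing halves the whole bill.
    return total * 0.5 if batch else total


if __name__ == "__main__":
    workload = dict(input_tokens=1_000_000, output_tokens=200_000)
    print(f"Grok 4:             ${estimate_cost(GROK_4, **workload):.2f}")
    print(f"Opus 4.1:           ${estimate_cost(OPUS_4_1, **workload):.2f}")
    print(f"Opus 4.1 + caching: "
          f"${estimate_cost(OPUS_4_1, cached_fraction=0.8, **workload):.2f}")
    print(f"Opus 4.1 + both:    "
          f"${estimate_cost(OPUS_4_1, cached_fraction=0.8, batch=True, **workload):.2f}")
```

For this example workload (1 million input tokens, 200,000 output tokens), the list-price gap is $6 versus $30. Under the assumed discounts the Opus 4.1 figure falls to roughly $19 with 80% of the input cached and to under $10 with batching on top, which narrows the gap but does not close it.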

Anthropic’s pricing strategy reflects its market positioning, which is focused on serving enterprise users and high-value scenarios. Opus 4.1 is not a general-purpose tool designed for everyone; rather, it is a “precision instrument” tailored for mission-critical and high-return tasks.

This positioning makes its high cost worthwhile in enterprise-level applications, especially for tasks that demand extreme precision and logic.

02. Price-Performance Comparison: Different Scenarios, Different Values

In terms of price-performance evaluation, both models have their strengths, and their actual value is highly dependent on the use case and application needs.

- Enterprise Application Value: For large enterprises, Claude Opus 4.1 demonstrates significant value in handling complex tasks. Rakuten Group’s test results show that Opus 4.1 can accurately pinpoint the parts of a large codebase that need modification without making unnecessary changes or introducing new bugs.

This precision makes development teams more willing to use it for daily debugging tasks, thereby improving overall development efficiency.

In enterprise user evaluations, the performance leap from Opus 4 to Opus 4.1 is significant, roughly equivalent to the upgrade from Sonnet 3.7 to Sonnet 4. This level of performance improvement can mean a substantial return on investment for companies that rely on AI for core business processes.

- Research and Development Value: In the fields of research and code development, Grok 4 shows unique advantages. Its multi-agent architecture allows different sub-models to verify hypotheses in parallel, and it’s already being piloted at several U.S. universities for materials computation.

The integrated Grok 4 Code mode supports whole-file bug fixes and cross-language migration, with xAI even claiming it’s “better than Cursor.”

- Cost Control Comparison: In terms of cost control, Claude Opus 4.1 offers more flexible options. Developers can adjust the “thinking budget” parameter to find the optimal balance between cost and performance.

This fine-grained control is especially important for projects with strict budget constraints, allowing users to allocate different computational resources based on task importance.
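As a rough illustration of allocating the thinking budget by task importance, the sketch below builds a request payload without sending it. The `thinking` field with `type` and `budget_tokens` follows the shape of Anthropic’s Messages API for extended thinking. Everything else is an assumption made for illustration: the tier names and token values in `THINKING_BUDGETS`, the `build_request` helper, and the placeholder model identifier, which should be checked against the current documentation.

```python
# Hypothetical tiers: how many thinking tokens to allow per task importance.
THINKING_BUDGETS = {
    "low": 2_000,      # quick lookups, simple edits
    "medium": 8_000,   # routine debugging
    "high": 24_000,    # multi-file refactors, hard reasoning
}


def build_request(prompt, importance="medium", answer_tokens=4_000,
                  model="claude-opus-4-1"):  # placeholder model id (assumption)
    """Build a Messages-API-style payload with a per-task thinking budget."""
    if importance not in THINKING_BUDGETS:
        raise ValueError(f"unknown importance tier: {importance!r}")
    budget = THINKING_BUDGETS[importance]
    return {
        "model": model,
        # max_tokens has to exceed the thinking budget so that room
        # remains for the visible answer.
        "max_tokens": budget + answer_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget},
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    request = build_request("Find the race condition in this module.", "high")
    print(request["thinking"], request["max_tokens"])
```

Because thinking tokens are billed as output, a lower tier directly caps the most expensive part of the request, which is where the cost control comes from.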

While Grok 4’s flagship subscription is costly, its multi-agent architecture may reduce overall computation time in some scenarios, thereby indirectly lowering costs. This is particularly true for complex problems that require multi-faceted analysis, where four agents working simultaneously might be more efficient than a single agent making multiple calls.
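The latency side of that argument can be shown with a toy simulation. This is not xAI’s implementation, whose internals are not public. It is a sketch in which four mock agents each take a fixed delay to answer, run either concurrently or one after another, and a majority vote picks the final answer. All names (`mock_agent`, `solve_parallel`, `solve_sequential`) and the delays are hypothetical.

```python
import asyncio
import time
from collections import Counter

# (answer, simulated latency in seconds) for four mock agents.
AGENTS = [("A", 0.05), ("A", 0.05), ("B", 0.05), ("A", 0.05)]


async def mock_agent(answer, delay):
    """Stand-in for one model call: wait, then return a canned answer."""
    await asyncio.sleep(delay)
    return answer


def majority(answers):
    """Return the most common answer among the agents."""
    return Counter(answers).most_common(1)[0][0]


async def solve_parallel(agents):
    # All agents start together; wall time is roughly the slowest agent.
    answers = await asyncio.gather(*(mock_agent(a, d) for a, d in agents))
    return majority(answers)


async def solve_sequential(agents):
    # One agent at a time; wall time is roughly the sum of all delays.
    answers = [await mock_agent(a, d) for a, d in agents]
    return majority(answers)


def timed(coro):
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    for name, solver in (("parallel", solve_parallel),
                         ("sequential", solve_sequential)):
        answer, elapsed = timed(solver(AGENTS))
        print(f"{name}: answer={answer} elapsed={elapsed:.2f}s")
```

Both strategies reach the same answer, and the parallel run finishes in about the time of a single agent. The simulation speaks only to wall-clock time: all four agents still do the same amount of work, so whether the speed-up turns into lower spend depends on how that work is billed.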

Summary:

In terms of pricing strategy, the Grok 4 vs Claude Opus 4.1 comparison reveals distinct approaches: Grok 4 adopts an aggressive, high-end pricing model to reinforce its image as a “technological leader,” while Claude Opus 4.1, despite having a higher unit price, provides more flexible cost control through advanced caching and batch processing optimizations—making it better suited for the practical needs of enterprise users.

Grok 4 and Claude Opus 4.1 represent two different development paths for current large language models. Grok 4, with its multi-agent architecture, has achieved groundbreaking results in math, scientific reasoning, and general AI tests, making it more suitable for research, complex engineering, and frontier exploration. Claude Opus 4.1, with its Extended Thinking technology, is stable and reliable in code generation, enterprise-level applications, and safety compliance, with a particular advantage in large codebase repair and commercial scenarios.

Overall, Grok 4 leans toward research and innovation, while Claude Opus 4.1 is more focused on enterprise adoption and high-value applications. The user’s choice should be based on their specific scenarios and budget: if you are pursuing cutting-edge intelligence breakthroughs, Grok 4 is more appealing; if you prioritize stability, code precision, and business reliability, Claude Opus 4.1 is the better solution.