Microsoft Research has released VibeVoice-1.5B, an open-source text-to-speech model built for long-form, multi-speaker speech synthesis.

The release improves the naturalness, quality, and length of synthetic speech, which opens the door to more dynamic voice AI applications.

Keep reading to see what makes the VibeVoice-1.5B model a game-changer.

What is VibeVoice-1.5B?

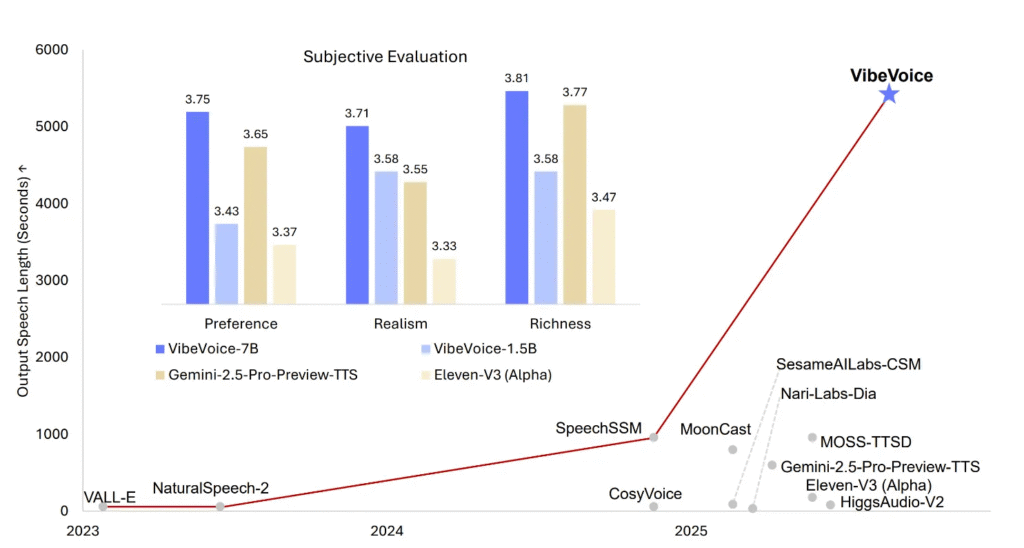

VibeVoice-1.5B is a state-of-the-art text-to-speech (TTS) model capable of producing up to 90 minutes of continuous, high-fidelity speech in a single inference. This represents a major departure from earlier systems, which often struggled to maintain consistent voice tone and semantic coherence beyond 30 minutes.
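To judge whether a script is likely to fit in a single pass, a rough duration estimate can help. The sketch below is only an illustration: it assumes an average English speaking rate of 150 words per minute, and the helper names `estimated_minutes` and `fits_single_pass` are hypothetical, not part of VibeVoice. Word counting by whitespace does not work for Chinese text.

```python
MAX_MINUTES = 90          # single-pass limit quoted in the article
WORDS_PER_MINUTE = 150    # assumed average speaking rate, not a model property


def estimated_minutes(text, words_per_minute=WORDS_PER_MINUTE):
    """Rough spoken duration of English text, based on a whitespace word count."""
    return len(text.split()) / words_per_minute


def fits_single_pass(text, max_minutes=MAX_MINUTES):
    """True if the estimated duration stays within the quoted limit."""
    return estimated_minutes(text) <= max_minutes


short_script = "hello " * 300       # 300 words
long_script = "hello " * 15_000     # 15,000 words

print(estimated_minutes(short_script), fits_single_pass(short_script))  # 2.0 True
print(estimated_minutes(long_script), fits_single_pass(long_script))    # 100.0 False
```

The real duration depends on the voice, punctuation, and pacing, so treat the result as a planning aid only.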

In addition to its long-form capabilities, the model supports up to four distinct speakers within the same audio generation session. This is a notable upgrade from conventional open-source TTS systems, which typically accommodate only one or two voices. Furthermore, VibeVoice-1.5B compresses raw 24 kHz audio by a factor of 3,200, reportedly without a noticeable loss of clarity.
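The compression figure is easier to appreciate as a frame rate. The quick calculation below uses only the numbers quoted above (24 kHz input, a factor of 3,200, and 90 minutes). It treats the factor as a temporal downsampling ratio, which is an assumption about how the figure is defined, and the variable names are illustrative.

```python
SAMPLE_RATE_HZ = 24_000      # input sample rate quoted above
DOWNSAMPLE_FACTOR = 3_200    # compression factor quoted above
MAX_MINUTES = 90             # longest single generation quoted above

# Assumption: the factor is the ratio of raw samples to compressed frames.
frames_per_second = SAMPLE_RATE_HZ / DOWNSAMPLE_FACTOR
max_seconds = MAX_MINUTES * 60
frames_for_max_length = int(frames_per_second * max_seconds)
raw_samples_for_max_length = SAMPLE_RATE_HZ * max_seconds

print(f"{frames_per_second} frames per second")                        # 7.5
print(f"{frames_for_max_length:,} frames for {MAX_MINUTES} minutes")   # 40,500
print(f"{raw_samples_for_max_length:,} raw samples for the same audio")  # 129,600,000
```

Under that assumption, 90 minutes of audio shrinks from roughly 130 million samples to about 40,000 frames, which helps explain how such long sequences become tractable for the model.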

The Core of the VibeVoice Model

At the heart of VibeVoice-1.5B is a novel dual-tokenizer framework that sets it apart from conventional speech synthesis models. While traditional TTS systems rely on a single tokenizer for feature extraction, Microsoft’s new model employs two specialized tokenizers working in tandem:

- The acoustic tokenizer captures and preserves speaker-specific vocal qualities while enabling ultra-high compression rates.

- The semantic tokenizer focuses on contextual and emotional features embedded in the text, ensuring the synthesized speech conveys appropriate meaning, tone, and expressiveness.

This dual approach effectively mitigates common synthesis flaws such as timbre instability and semantic misalignment, resulting in more fluid and human-like audio output.

Key Features of VibeVoice-1.5B

VibeVoice-1.5B brings several industry-leading features that broaden the scope of AI-generated speech:

- Extended Context and Multi-Speaker Handling: The model can generate long-duration audio (up to 90 minutes) with support for four unique voices, making it ideal for audiobooks, podcasts, and multi-character narratives.

- Turn-Taking Synthesis: Unlike systems that stitch together single-voice clips, VibeVoice-1.5B natively simulates conversational dynamics, with multiple speakers taking turns in a natural, sequential manner.

- Cross-Lingual and Singing Capabilities: Although primarily trained on English and Chinese datasets, the model demonstrates promising cross-lingual synthesis abilities and can even generate singing, a rare feature among open-source TTS offerings.

- Open Licensing: Released under the permissive MIT License, the model is freely available for both academic and commercial use, encouraging innovation and reproducibility.

- Emotion and Expressiveness Control: Fine-grained control over vocal emotion makes this model particularly useful in conversational AI, entertainment, and educational content.

- Streaming-Ready Architecture: While the current 1.5B version focuses on long-form synthesis, its design anticipates a future 7B-parameter model optimized for real-time streaming applications.
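The multi-speaker, turn-taking behavior described above implies that the input is an ordered script with at most four voices. A minimal sketch of preparing such a script follows. The `Turn` class, the `build_script` helper, and the `Speaker N: text` line layout are all assumptions made for illustration, not a confirmed VibeVoice input format; check the official documentation for the actual convention.

```python
from dataclasses import dataclass

MAX_SPEAKERS = 4  # speaker limit quoted in the article


@dataclass
class Turn:
    speaker: str
    text: str


def build_script(turns):
    """Render turns, in order, as one 'Speaker N: text' line each.

    The line layout is an assumed convention for illustration only.
    """
    speaker_ids = {}
    lines = []
    for turn in turns:
        if turn.speaker not in speaker_ids:
            if len(speaker_ids) == MAX_SPEAKERS:
                raise ValueError(f"more than {MAX_SPEAKERS} distinct speakers")
            # Number speakers in order of first appearance.
            speaker_ids[turn.speaker] = len(speaker_ids) + 1
        lines.append(f"Speaker {speaker_ids[turn.speaker]}: {turn.text.strip()}")
    return "\n".join(lines)


script = build_script([
    Turn("host", "Welcome back to the show."),
    Turn("guest", "Thanks for having me."),
    Turn("host", "Let's get started."),
])
print(script)
```

Because turns are strictly sequential, a plain ordered list is enough here; overlapping speech would need a different representation.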

VibeVoice-1.5B Model Limitations

Despite its advanced capabilities, VibeVoice-1.5B has certain constraints that users should consider:

- Limited Language Support: The model is optimized only for English and Chinese. Usage with other languages may lead to reduced intelligibility or unintended output.

- No Overlapping Speech: It does not support overlapping dialogue or interruptions between speakers, limiting its use in highly dynamic conversational settings.

- Speech-Only Output: The model generates speech only, without background music, sound effects, or ambient noise.

- Ethical and Legal Considerations: Microsoft explicitly prohibits misuse of the model for voice impersonation, disinformation, or bypassing authentication systems. Compliance with applicable laws and clear disclosure of AI-generated content are mandatory.

- Non-Real-Time Use: This iteration is not designed for low-latency applications such as live interaction or streaming. Users requiring real-time synthesis should await the upcoming 7B variant.

Who is VibeVoice-1.5B For?

VibeVoice-1.5B is aimed at AI researchers, content creators, developers, and enterprises exploring next-generation voice synthesis.

Its open-source nature and scalable architecture make it particularly appealing for prototyping long-form audio projects, voice-enabled applications, and multi-speaker narratives.

The model is now accessible on Hugging Face and GitHub, accompanied by comprehensive documentation and usage guidelines.

Conclusion on VibeVoice-1.5B Model

Microsoft’s release of VibeVoice-1.5B represents a pivotal moment for open-source speech synthesis. By combining extended duration, multi-speaker support, emotional nuance, and efficient compression, the model sets a new standard for what’s possible in AI-generated audio.

While it currently focuses on English and Chinese and is intended primarily for research, its architecture hints at a future where real-time, expressive, and ethically transparent TTS is widely accessible.

As synthetic voice technology continues to evolve, VibeVoice-1.5B offers a glimpse of a more interactive and authentic AI audio experience, one that could redefine industries from entertainment to assistive technology.